The Missing Layer in Agent Code Review

A Reasoning Trace for Every Diff

Introduction

A decade ago I was an early engineer on a low code, no code platform at AWS. The UX was simple, but the backend was a complicated distributed system, and one of its services maintained an immutable log of every command processed, which we used as a source of truth for debugging and replay.

I’ve been thinking about that log a lot as I watch coding agents generate more of the code that ends up in production. There is a messy process that turns an initial prompt into a code diff. Multiple turns, context pulled in through tool calls, corrections from the developer along the way. Eventually a diff lands in a PR. In most cases, nothing about the reasoning that produced it lands with it.

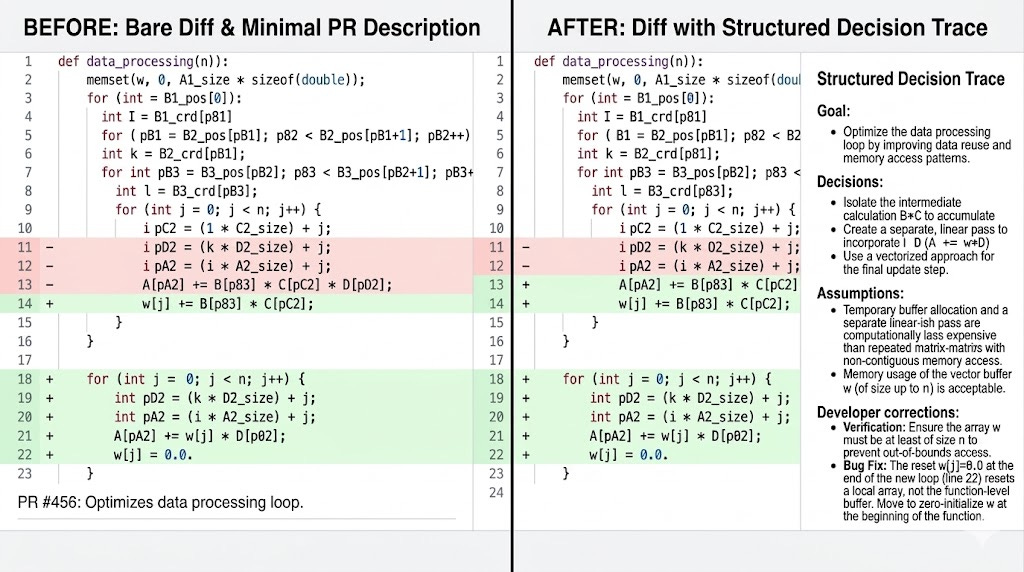

The industry is racing to fix the wrong half of this. Most “agent memory” work is about making the agent smarter on the next task. What’s missing is an artifact for this task: a structured record of the decisions behind the diff, shipped with the code, so a reviewer, whether agent or human, doesn’t have to reconstruct intent from scratch before approving a merge.

That artifact isn’t an immutable command log. It’s something else, and that’s what this post is about.

The Decision Tracker

Coding agents don’t need a heavier memory layer. They need a structured record of the reasoning behind each diff, attached to the diff itself. However, most of the industry is focused on building memory layers instead. At AI Dev Conf last week, vendor after vendor pitched memory products: persistent context stores, cross-session recall, retrieval layers tuned for agents. Memory layers make sense for domain agents that need to remember the user across sessions. Coding agents don’t have that requirement.

Long-term project context already has a home. It belongs in the repo, as version-controlled markdown that the whole team and every agent can read: AGENTS.md, ADRs, design docs, contributing guides. That’s context-as-code, and it scales because it lives where the code lives. What doesn’t have a home is the per-session reasoning that produced a specific change. That’s the gap.

Why does this gap matter more for coding agents than for, say, a chatbot? Because code is a shared artifact. The reviewer is not the same person as the prompter. A teammate, an on-call engineer, a reviewer agent, or you yourself in six months will need to understand why this diff exists. The binding constraint isn’t how smart the agent is on the next task. It’s whether this task is legible to whoever reads it next.

A decision tracker is the artifact that makes it legible. It’s a structured log, extracted from the session that produced the diff, and submitted as part of the commit. It captures:

the goal that triggered the change

decisions made and the alternatives considered

assumptions about the codebase, requirements, or constraints

developer corrections that redirected the agent

context loaded from documentation or files

external tool calls such as linting or testing

Here’s a sample decision tracker:

Decision Trace: PR #4023

Goal: [What task/feature/fix triggered this change]

Decisions:

1. [Decision] — chose [X] over [Y] because [rationale]

2. [Decision] — chose [X] because [rationale]

Assumptions:

- [Any assumptions made about the codebase, requirements, or constraints]

Risk areas:

- [Files/logic where the change could have unintended side effects]

What Decision Trace Buys You

Three things change once the reasoning ships with the diff. Reviews get faster because the reviewer no longer has to reverse engineer the agent’s logic. Incident response gets easier because the on-call engineer can see why a change was made, not just what changed. And in regulated industries, the trace itself becomes a compliance artifact. Incident response is where this matters most. When something breaks in production, the first job is to rollback.

The second is to figure out which change caused it and why. Today, that usually means a lot of back and forth on Slack and in tickets to piece together the context. With a decision trace attached to each diff, the on-call engineer can read why a change was made, not just what changed, and get to the root cause faster.

The third benefit shows up in regulated industries. A few years back, I spoke to a software engineer who built software for medical devices. His team had a strict rule: no more than a hundred lines of code per developer per day, because humans lose focus over long stretches and start making mistakes. The interesting question is what happens when an agent writes the code instead. The agent doesn’t get tired, but the reviewer still does, and the regulator still needs evidence that a human was in the loop. A decision trace is that evidence. It shows what the agent decided, what the human saw, and what the human approved. In an industry where someone is liable for the outcome, that record is the difference between a defensible process and a guess.

Implementation

The hard part is making this non-intrusive. The developer works with the agent on a feature or a fix. When the change is ready to commit, the agent should generate the decision trace from the session and add it to the commit description. No extra steps for the developer.

There are two ways to set this up. The first is to put the extraction instructions and the trace template in AGENTS.md or CLAUDE.md, so the agent picks them up at session start. The second is to write a Skill that the agent invokes when the developer commits. The Skill option is more reliable as instructions in markdown files get followed inconsistently. A Skill runs every time.

Today, the realistic approach is a single LLM call at commit time that summarizes the session. This works, but it has a known weakness. An agent narrating its own reasoning after the fact can confabulate or sanitize. The better long term design is to capture signals during the session as decisions are made, then assemble the trace from those signals at commit. That requires infrastructure that does not exist yet in most coding agents. Until it does, the LLM extraction approach is what is shippable.

One more design choice worth calling out. It is tempting to store the traces in the same repo as a separate folder. The commit description is already version controlled, already attached to the diff, and already visible in every git tool a reviewer uses. A separate file duplicates state and creates drift. Keep the trace in the commit message where it belongs.

Conclusion

The hardest problem with AI generated code is not making the agent smarter on the next task. It is making the current task reviewable. Long term project context already has a home in the repo. Per session reasoning does not, and that is the gap a decision trace fills. The pattern goes beyond code. Anywhere a human reviewer needs to understand why an AI made a recommendation, and anywhere there is liability for the outcome, the same accountability layer applies. Banking, healthcare, legal, and any other domain where the AI is one signature away from a real consequence will need this. Coding is just where the problem is most visible today, because the artifact is shared and the reviewer is rarely the same person who wrote the prompt. Build the audit layer for code now, and the pattern will be ready for everywhere it shows up next.